THIS STUFF MATTERS: A lot of people play around with “op amp rolling” but they mostly lack the ability to measure the results. A lot of op amps get “upgraded” to much more expensive parts usually with zero real world benefit. Worse, these usually faster parts can easily be unstable in a circuit designed for a much slower part. Some of the differences heard could be high frequency instability. But when someone “upgrades” to an expensive op amp with high expectations many assume the different sound must be better sound. This article is all about measuring the results and putting some facts around the differences between op amps in a typical audio application.

REALITY CHECK: This isn’t a comprehensive comparison. It’s mostly a side story to my research and development of the O2 Headphone Amp. So please don’t expect a technical paper worthy of the Audio Engineering Society. A complete op amp survey is at least several full weeks worth of work. For an example of a more ambitious effort, look up Samuel Groner’s “Operational Amplifier Distortion” published in 2009. It’s a 436 page monumental effort in the form of a huge 35 MB PDF (which is why I’m not linking to it here). I’ve also referenced the work of Douglas Self who has run many op amps through an Audio Precision SYS-2700 series audio analyzer in various configurations.

LIMITED SCOPE: The O2 headphone amp uses DIY-friendly DIP8 dual op amps. It also requires op amps that can operate comfortably at +/- 12 volts (24 volts total). This rules out single devices, surface mount packages, and lower voltage op amps. While I did adjust the compensation and feedback impedance with some op amps, ultimately, the measurements are all in the same circuit configuration unless otherwise noted.

MOUSER’s SELECTION: One of the design goals of the O2 was to purchase as many parts as possible from a single vendor and, for some good reasons, that turned out to be Mouser Electronics. Mouser offers TI, Burr Brown, JRC/NJR, On Semi and others, but they don’t offer National, Analog Devices or Linear Technology. So most of my evaluation was limited to what Mouser stocks. But I did test a couple of parts from National and Analog Devices.

BLIND TESTING CHALLENGE: For those of you who believe there’s more to op amp sound than just the measurements, please read the Op Amp Myths article if you haven’t already. And if you’re still a skeptic, let’s schedule your YouTube video appearance with an ABX comparator, the op amp of your choice, and a $0.60 NE5532 using two O2 boards and the op amps in the gain stage. If you can tell them apart, I’ll give $500 to the charity of your choice. If you can’t tell them apart, you donate $500 to the charity of my choice. The challenging op amp’s measurements must at least meet the minimum criteria outlined in the O2 Design Principals. The test would be administered by an independent third party (I won’t even be present). Win or lose the video goes on YouTube and the story is published on this blog. And if you think the O2 is the weak link, please see: O2 Open Challenge

BOTTOM LINE: For those wanting to skip the Tech Section, the conclusions can be summed up as follows:

- At gains less than 4X nothing overall could beat the $0.39 NJM2068 in the O2’s gain stage. This is especially true if you’re concerned about power consumption for battery operation.

- At gains of 5X and higher nothing could beat the $0.65 NE5532 except by a few dB in noise performance.

- The older TL072, despite being used in thousands of audio devices, is way out of its league against newer op amps. It’s no longer even in the game in a low noise application like the O2.

- For the output stage, the NJM4556 was untouchable among compatible DIP8 dual op amps.

TECH SECTION

CONSTRAINTS: The testing was mostly limited to op amps suitable for the gain stage of the O2. See the O2 Design Process article for more.

APPLICATIONS: Most of this article is written from the perspective of using op amps for headphone and line level audio applications. I’m excluding high gain applications like microphone preamps, phono preamps, instrumentation equipment, etc. Used in high gain critical applications the rules can be different.

OP AMP SPECS: When you start “screening” op amps for a project you have to look at packages (DIP, SOIC, etc.) and decide if you want a single, dual, or quad part. Beyond that it mostly comes down to the specs and here are the highlights:

- Intended Application – The semiconductor companies actually do know what they’re doing. They know what parameters are most important for audio applications and most design op amps with audio specifically in mind. It’s best to use those parts designed for audio, not those optimized for, say, video use. This seems obvious enough but several audio designers break this rule. One example is the OPA690 in the AMB Mini3, which isn’t even specified at audio frequencies and has rather horrible specs for audio. Anyone who thinks they know more about op amp applications than the guys designing the op amps is very likely mistaken. It’s like putting tractor tires on your sports car thinking they’ll improve the traction. Stick to the tires made for sports cars.

- Operating Voltage – The O2 op amps need at least a 24 volt (+/- 12) power supply. This rules out a lot of the newest parts that are designed for low voltage portable use. Also note some op amps are optimized for single supply use and, while they’ll usually work with dual supplies, they may not be an optimal choice.

- Unity Gain Stable – There are some “un-compensated” op amps available that are not unity gain stable. They specify the minimum gain they can be used at. These op amps are generally best left to those who understand the complex math involved with calculating things like phase margin and who have the test equipment to verify the result (RMAA and low bandwidth scopes don’t qualify). Otherwise, stick with Unity Gain Stable compensated op amps.

- Package – The O2 requires a dual op amp in a DIP8 package. Op amps also come in single and quad versions, various surface mount packages, round metal TO99 “cans”, and some high power packages for higher thermal dissipation.

- Single or Dual – The crosstalk between sections of dual op amps designed for audio use is typically better than –105 dB. That’s vastly better than the rest of a headphone amp can manage so it’s not an issue. There’s no need to use a single op amp for each channel. The 3 contact plug on almost every pair of headphones creates more crosstalk all by itself. Some claim thermal coupling between channels is a problem in dual parts, but the power consumption of a gain stage is usually dominated by the quiescent current which is relatively constant. So there won’t be significant thermal variations on the die due to the music signal. A dual op amp also has the advantage of providing better matching between the channels. So for cost savings, and to save board space, the choice is obvious: use a dual op amp.

- FET or Bipolar – You can broadly divide audio op amps into these two categories based on the type of transistors used in their input stage—FET or bipolar. Some claim various types of FET (JFET, Bi-FET, Di-FET, etc.) inputs “sound better” but they’re usually more noisy in real world use. The main reason to use FET inputs is for their superior DC performance (especially bias current) which is mostly useless in an audio application. The one exception being, if you’re going to hang a volume control right off the input, a FET op amp will generally put less DC through the volume control wiper. This helps reduce the “rustling” noise you get when you adjust the volume. It also can allow larger resistor values without excessive DC offset. But the O2 solves these issues in other ways so FETs are generally more of a liability if accuracy is the goal. The ultra high-end FET devices, like the Di-Fet OPA627, are an exception to the rule but the FET OPA2134 is not. It performs worse in some ways than the much cheaper bipolar 5532.

- Quiescent Current – In a battery powered device the quiescent (idle) current is a critical spec. Most of this current is for biasing the internal transistors. Some op amps need as much as 9 mA per amp section or more, while low power op amps typically have poor drive capability, higher noise, lower GBW, and poor slew rate. There are significant trade-offs between power and performance.

- Gain BandWidth Product (GBW/GBWP/GBP) – The GBW helps indicate how much feedback will be available at higher frequencies and higher gains to correct distortion. But higher GBW usually means more power drain and possibly less stability. For low distortion at 20 kHz it should be at least 5 MHz for G=1. Some manufacturers specify the Unity Gain frequency instead, which is the same as GBW with G=1. To compare GBW between different op amps it needs to be specified at the same gain (i.e. G=1, G=10, etc.). Most manufacturers provide a graph as well.

- Open Loop Voltage Gain (myth busted) – The open loop gain combines with the GBW above to determine how much feedback (NFB) is available across the power band. Generally, the higher the better and it should be at least 90 dB worst case. The best audio op amps are around 140 dB typical and it can make a big difference in the performance—especially at higher gains. Nearly all the audiophile myths about too much feedback have been proven false by lots of research—some of it relatively recent. Bruno Putzeys (a truly brilliant guy), in particular, has put most of the remaining persistent myths to their overdue death. May they rest in peace (but I’m sure they won’t). More on this below.

- Slew Rate – Here’s another huge myth: The faster the slew rate the better. But the fastest “slew” you can get from a 16/44 digital recording has a period of about 22 uS. So the O2, worst case, needs to slew 20 volts in 22 uS or a slew rate of 0.9 V/uS. And even considering those rare 24/96 recordings, the requirement is still 1.8 V/uS. SACD or 24/192, in theory, could require about 3.6 V/uS but, in practice, never will as that would be a 0 dBFS signal close to 100 kHz. Such a signal would never make it through the recording chain, and if it did, would fry tweeters and cause bats to fly into the side of your house. The peak levels in music generally fall as the frequency rises. This is especially true at ultrasonic frequencies. If you think I’m wrong, please reference a recording that can challenge even a 1.5 V/uS headphone amp. The industry rule of thumb, even on the recording side of the signal chain, is 0.2 V/uS per volt RMS of maximum output. I’ve doubled that just to be ultra conservative so 7*0.4 = 2.8 V/uS for the O2’s requirement. As I have explained elsewhere, slew rates well in excess of what’s needed come at a price. Somewhere in the range of 2.5 – 5 V/uS is ideal for a high output headphone amp like the O2.

- Feedback & Speed Myths – First of all, some audiophiles are under the mistaken impression that negative feedback (NFB) is mostly a bad thing. I just read, in an audiophile magazine, that NFB can only work correctly if an audio amp has zero delay. And they claimed such amplifiers don’t exist so the next best thing is either zero feedback or an extremely fast amplifier. The amp being discussed was touted as good for “250 Mhz” (yeah right!) resulting in “essentially zero delay”. This is a huge pile of false technobabble. The whole idea of “by the time a feedback loop corrects an error, it’s too late” is just layperson nonsense. Look up what Bruno Putzeys and Doug Self have written on the topic if you want the real truth. To use a layperson’s analogy, nearly any DC motor driving a turntable is controlled with a feedback loop. The turntable has rather massive delays with respect to current inputs to the motor vs speed due to the mass of the platter. The feedback pundits argue by the time the feedback detects an error, the speed is already wrong and can’t be corrected due to the delays. But, somehow against all those supposed audiophile gurus, the speed is rock steady and the feedback works perfectly. The same is true in an amplifier. The only speed issue that matters is the amp should never be slew rate limited. As long as that condition is satisfied, there’s no such thing as “Transient Intermodulation Distortion” or other such feedback related problems. Those are all dinosaurs from the era before fast power transistors were available for high power amps. It’s a non-issue at headphone voltages and below even using $0.39 parts like the NJM2068. Really.

- CMRR – Common Mode Rejection Ratio is the ability of an op amp to reject signals that appear identically (in phase) on both inputs. This matters most when using an op amp in differential mode but it can help lower distortion in many single-ended circuits. For example, with the popular voltage follower op amp “buffer” shown to the right, the distortion will rise with input impedance by an amount that depends on the op amp’s CMRR. In other words, if you stick a volume control in front of a buffer, and turn down the volume, an op amp with better CMRR may well outperform one with lesser CMRR. The close quarters inside a small portable amp result in more electromagnetic coupling of high current signals throughout the circuitry. Here again, higher CMRR helps the critical gain stage reject what otherwise might become distortion. For this application at least 80 dB worst case and 100+ dB typical is desirable. It’s also worth noting CMRR is often asymmetrical, which is only sometimes disclosed on datasheets.

- PSRR – Power Supply Rejection Ratio is the ability of an op amp to reject signals on the power supply rails such as ripple and distorted versions of the music signal. The grain of truth behind the common audiophile desire for massive overkill power supplies is they can make a difference but only when the audio circuit has insufficient PSRR. And a lot of audiophile designs have poor PSRR as they use discrete circuits or op amps not designed for audio. The OPA690 in the Mini3, for example, has poor PSRR for audio use. But if you simply use op amps with excellent PSRR, any variations on the power supply end up well below the noise floor of the entire design even with an inexpensive power supply. It’s a much more elegant and rational solution than a power supply with 50 times the required current capability. Like CMRR, PSRR can be asymmetrical.

- Turn On/Off Characteristics – Unless there’s an output relay to protect the headphones it’s important the op amp be reasonably well behaved on power up and power down. Not all are. And it’s often not covered in the datasheets so you just have to test it with a real scope at a slow sweep speed (most AC coupled soundcard scopes and RMAA won’t work). Don’t try to subjectively judge the “thump” in a pair of headphones as it’s hard to hear large amounts of DC. And a DMM is nearly useless due to their very slow update rate (they’ll miss the true peak of the transient by a mile).

- Drive Capability – To keep Johnson noise low, the op amp should have low distortion into a 1K load. A lot of op amps fail here—especially low power versions. This can be hard to figure out from many datasheets. Some of the better audio op amps are specified into 600 ohms. If you only see loads of 5K or higher, the part probably isn’t suitable for high-end audio. Ideally look for a THD graph at 2K or less with some gain.

- Low THD – This is also often poorly specified. And if it’s not specified at all, or given as x dB for specific harmonics, you’re probably looking at the wrong op amp for an audio application. Ideally you want to know the THD at various gains, versus frequency, into various loads. In practice, this often requires testing with an audio analyzer. If the entire amp is to meet a 0.01% THD goal, the gain stage needs to be well under that.

- Low Noise – Op amp noise is modeled two ways: current noise and voltage noise. The O2 is a low impedance application, so the voltage noise will likely dominate (current noise only turns into a noise voltage across the source impedance), but both have to be considered along with the Johnson noise. The math isn’t trivial. You can’t just compare the raw datasheet numbers and know how the amp will really perform in a specific application unless both the voltage noise and current noise are lower than another op amp. And even then Johnson noise could still dominate so both would perform roughly the same. And, in reality, the voltage and current specs tend to be mutually exclusive (i.e. amps with the lowest voltage noise usually have higher current noise and vice versa).

- Phase Reversal – Some op amps, when their inputs are overloaded, violently slam into the opposite supply rail. And sometimes that nasty fact isn’t even on the datasheet or is buried in a footnote somewhere. Phase reversal is unacceptable in this application.

- Rail-to-Rail – This isn’t required for the O2, but would be nice—especially when running from batteries. The problem is most rail-to-rail audio op amps are newer designs and often only come in surface mount packages and/or can’t handle a 24 volt supply. And the few that exist and meet the other requirements tend to be expensive. Even non-rail-to-rail op amps vary widely in how close they can get to the rails with various loads and operating voltages. The datasheets usually have a graph or two showing voltage swing versus load resistance and/or current output. It’s rarely worth using an op amp that’s otherwise poorly suited to high quality audio just because it’s rail-to-rail.

- DC Offset Voltage & Bias Current – This usually isn’t an issue in an AC coupled application like the O2 but can be a very serious issue in a DC coupled application not using a servo or a high-pass filter in the feedback loop. The higher the gain the more it can matter.

- Short Circuit Protection – Any op amp driving an external output (line outs, headphone jacks, etc.) should have short circuit protection unless you can add enough series resistance without compromising the performance (which is often impossible). Beware even with short circuit protection it might be short term if the device does not also have thermal protection. The datasheets are usually fairly clear on this. There’s also the chance of exceeding the Safe Operating Area (SOA) of the output stage at higher supply voltages. Some designs may require some series output resistance to meet all these requirements. There can be trade offs involved. Enclosing short circuit protection in the feedback loop, as done in the AMB Mini3, can create stability issues and increase distortion.

- Equivalent Schematics – Some manufacturers publish so called “equivalent schematics” for their op amps. The implication is it’s a fairly accurate representation of how they actually made the part. In reality, however, it’s often not as they don’t want to help their competitors out too much. The schematics have become rather generic so as not to give too much away. So don’t try to take them literally. There’s often a lot more going on.

- Unique Advantages – For those who prefer discrete designs over op amps, I refer you to Q9 in the small snippet to the above right. Op amp designers are free to optimize every single transistor in the device including creating things you can’t buy off the shelf—like transistors with 3 collectors. This, combined with the inherent matching of transistors made on a single die, and near perfect thermal tracking, provides a bag of tricks no discrete designer can match. Sure there are advantages both ways, but the op amp guys have an awful lot at their disposal—particularly compared to some guy working in his basement.
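A few of the numbers in the spec list above (the slew rate rule of thumb, the feedback left over at 20 kHz for a given GBW, and how voltage, current, and Johnson noise combine) are just arithmetic, so here is a rough Python sketch of them. The function names, the 300 K temperature, and the dominant-pole (single-pole rolloff) model are assumptions for illustration only. The 7 V RMS figure is inferred from the "7*0.4" in the slew rate item. None of this replaces a datasheet graph or a real measurement.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K


def rule_of_thumb_slew_rate(v_rms_max, v_per_us_per_vrms=0.4):
    """Slew rate target in V/us: 0.2 V/us per volt RMS, doubled to 0.4."""
    return v_rms_max * v_per_us_per_vrms


def sine_slew_rate(v_peak, freq_hz):
    """Peak slew rate of a full-level sine wave, 2*pi*f*Vpeak, in V/us."""
    return 2 * math.pi * freq_hz * v_peak / 1e6


def feedback_available_db(gbw_hz, gain, freq_hz, open_loop_db):
    """Approximate loop gain (dB) at freq_hz for a non-inverting stage.

    Assumes a single dominant pole: the open loop gain is flat at
    open_loop_db until it meets the GBW / f rolloff.
    """
    open_loop = min(10 ** (open_loop_db / 20), gbw_hz / freq_hz)
    return 20 * math.log10(open_loop / gain)


def total_input_noise_nv(en_nv, in_pa, r_source, temp_k=300.0):
    """RSS of voltage noise, current noise x R, and Johnson noise (nV/rtHz)."""
    current_term_nv = in_pa * 1e-12 * r_source * 1e9
    johnson_nv = math.sqrt(4 * BOLTZMANN * temp_k * r_source) * 1e9
    return math.sqrt(en_nv ** 2 + current_term_nv ** 2 + johnson_nv ** 2)


if __name__ == "__main__":
    print("rule of thumb, 7 V RMS:", rule_of_thumb_slew_rate(7.0), "V/us")
    print("10 V peak sine at 20 kHz:", sine_slew_rate(10.0, 20_000), "V/us")
    print("5 MHz GBW, G=1, 20 kHz:",
          feedback_available_db(5e6, 1.0, 20_000, 100.0), "dB")
    # Hypothetical op amp figures (5 nV/rtHz, 0.5 pA/rtHz) with a 1K source.
    print("total noise:", total_input_noise_nv(5.0, 0.5, 1000.0), "nV/rtHz")
```

Plugging in real datasheet values for `en_nv` and `in_pa` along with the actual source resistance shows quickly which of the three noise terms dominates in a given circuit.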

COMPENSATION: Unity gain stable op amps, as being evaluated here, use dominant pole compensation for stability. This is to make sure that the amp does not oscillate when feedback is applied. In a low gain application, the amp might still show ringing on square waves due to the phase margin becoming small. This is usually compensated for by adding the correct value of capacitance in parallel with the feedback resistors from output to the negative input. These capacitors cause a zero and a higher frequency pole in the feedback loop response effectively cancelling one of the amp's own non-dominant high frequency poles (by placing a zero near it) and replacing it with a new one at a higher frequency. This results in an increased phase margin, improved stability, and improved transient response. It’s important to note this compensation isn’t “one size fits all”. It’s specific to many variables including the gain, the op amp’s own loop response, the impedances and reactive components in the circuit, etc. Like many things in audio it can be a delicate compromise say in an output stage with unknown external loads, or in a gain stage with two different gain options.
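To put rough numbers on the zero and the higher frequency pole the feedback capacitor creates, here is a small Python sketch. It assumes an idealized network: a feedback resistor in parallel with the capacitor, plus a resistor from the negative input to ground, and nothing else (no input capacitance, no op amp poles). The 220 pF value matches the feedback cap mentioned later in this article, but the resistor values and the function name are hypothetical and are not the O2's actual parts.

```python
import math


def feedback_zero_pole_hz(r_feedback, r_ground, c_feedback):
    """Zero and pole (Hz) of the feedback factor for Rf || Cf with Rg to ground.

    The zero sits at 1 / (2*pi*Rf*Cf) and the pole at
    1 / (2*pi*(Rf || Rg)*Cf), so the pole is always the higher of the two.
    """
    f_zero = 1.0 / (2 * math.pi * r_feedback * c_feedback)
    r_parallel = r_feedback * r_ground / (r_feedback + r_ground)
    f_pole = 1.0 / (2 * math.pi * r_parallel * c_feedback)
    return f_zero, f_pole


if __name__ == "__main__":
    # Hypothetical 3K / 2K resistors (gain of 2.5X) with a 220 pF cap.
    f_zero, f_pole = feedback_zero_pole_hz(3000.0, 2000.0, 220e-12)
    print(f"zero: {f_zero / 1e3:.1f} kHz, pole: {f_pole / 1e3:.1f} kHz")
```

In this simplified model the pole-to-zero spacing equals the stage gain (1 + Rf/Rg), which is one reason the same capacitor value can't be ideal for two different gain settings.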

THE ELITE FEW: Designer op amps are amazingly like hyper-expensive supercars. They’re vastly more expensive than their more mainstream counterparts, relatively high strung, and very high maintenance. While they may show their true colors in critical applications, like say inside a $20,000 audio analyzer, it’s hard to imagine where they offer any real-world benefit inside a headphone amp. It’s analogous to taking your half a million dollar Ferrari down to the Quick-E-Mart for a corndog. The only reason not to take a perfectly competent, and more comfortable, BMW instead is so you can be seen in your Ferrari. It’s not like the Ferrari will get you through urban traffic any quicker and you’re more likely to mow down some kid on a bicycle because of the poor visibility out of that low slung bodywork. It turns out the following op amps find their way into headphone products for exactly the same reason—to be seen. It has very little to do with better real world performance or a genuine difference in sound quality. Here are some of the elite:

- OPA627 – The Burr Brown/TI OPA627 might be the Bugatti Veyron of op amps as both offer some of the best performance numbers around. But, just like it’s pretty much impossible to explore the Veyron’s full performance anywhere but on a race track, the same is true with the OPA627. Ferdinand Piëch was playing corporate one-upmanship with the Veyron, and Burr Brown did the same with the OPA627. Basically it’s the op amp of choice for those who keep their towels on in the gym locker room. In exchange for your $24, TI gives you a stereo pair of op amps so quick they can easily go sideways. Unlike the Veyron, there’s no built-in stability control—that’s up to the designer to get right. With a slew rate of 55 V/uS, the OPA627 demands very careful attention to PC board layout, power supply bypassing, parasitic inductances and capacitances, and reactive loads on the output. Basically if you’re only going to drive on public roads in traffic--i.e. headphone audio applications--it’s expensive overkill and more trouble than it’s worth.

- AD797 – The Analog Devices AD797 is probably the OPA627’s closest competition. There’s no question it’s a good part, but it’s also over $10 for a single op amp per package. If anything, due to its more rational speed (slew rate = 20 V/uS), and better CMRR/PSRR at power supply ripple frequencies, it’s probably the better part for audio applications. But this game is all about bragging rights. So, sadly, it often gets pushed aside in favor of the more trendy and excessive OPA627.

- OPA604 – The Burr Brown/TI OPA604 is also designed for audio use and, unlike the 627 above, even comes in a dual version. But I’ve never bothered with it after Doug Self extensively tested it and concluded: “it is not very clear under what circumstances this op-amp would be a good choice”. He found the much less expensive 5532 outperformed it in most every way.

- *AD8610 – Analog Devices lists “high performance audio” among the applications for the AD8610/AD8620 and, at around $10 each for the single part and $17 for the dual part, they aren’t cheap either. And, like the choices above, they’re overkill for headphone amp applications. I tested this part in the QRV09 and, while it’s very respectable, I suspect the NE5532 would match it to the limits of most audio analyzers.

HIGH PERFORMANCE: If the above are the ultra expensive supercar elite, these are the more attainable high performance cars. They’re still expensive and demand extra maintenance but they’re more sanely priced and closer to offering performance you can actually use now and then:

- *LM4562/LME49860/LME49720 – National had a dedicated team of high-end audio engineers and they turned out some really great parts. The most popular are these op amps which have found their way into a lot of high-end audiophile blessed gear. The LM4562 and its siblings, in any headphone or DAC application I can imagine, should match any of the elite parts above. That’s objectively on an audio analyzer using the conventional suite of audio tests and subjectively in blind listening tests. The LM4562 was the first op amp to unseat the NE5532 as Doug Self’s overall benchmark. The LM4562 is electrically identical to the LME49720 but cheaper ($3) and comes in a DIY friendly DIP8 package. Samuel Groner did raise some common mode concerns with the LM4562 depending on how it’s configured. Doug Self, who also tested it in some similar configurations, seems less concerned.

- *OPA2134/OPA134 – The Burr Brown dual FET OPA2134, and the OPA134 single version, are the BMW 325 sedan of audiophile op amps. You see lots of them around, they’re not exactly cheap, and they offer better than decent performance. I’ve included this part in my comparison mainly because it’s so popular. They’re $3 – $4 each for the dual part. Personally, for the price, I’d much rather have the LM4562 which outperforms it overall. I had to laugh when I read Doug Self’s critique of this part. Commenting on Burr Brown’s claims of “superior sound quality” Self said, “regrettably, but not surprisingly, no evidence is given to back up this assertion.”

- *OPA2227/OPA227 – The OPA2227 is a more expensive Burr Brown bipolar op amp that offers lower noise specs and some other improvements over the 2134 above. This part is considered an upgrade over the popular OP-27 and LT1007 so I’ve included it in my tests. It has lower noise than the LM4562 but little else to recommend it in typical headphone/DAC applications.

- OP275 – The Analog Devices OP275 is another part Doug Self rated a solid FAIL. His summary: “it is probably best avoided.”

- Linear Technology – Linear Technology makes lots of op amps, each typically having an impressive spec or two, but they don’t seem to have the sort of home run all-rounders to match something like the LM4562. Samuel Groner tested several LT parts with mixed results. He was rather unimpressed with the LT1007, for example, as it had obvious crossover distortion and other flaws.

GIANT KILLER: The following op amp deserves its own category:

- *NE5532 – The NE5532, as mentioned in the last article, was originally developed by Signetics mainly for audio use. At the time, for audio applications, comparing it to other op amps was like comparing a modern day Ferrari to an old Volkswagen Beetle. It really wasn’t until the LM4562 (see above) came along there was much reason to use anything else for most consumer audio applications, especially if you exclude microphone and phono preamps. There are op amps with better DC specs, faster settling times, higher slew rates, lower offsets, etc. But none of those improvements help 99% of audio gear perform any better. And those “partly better” parts are often worse in more critical areas. The 5532 is the cornerstone of nearly all high-end professional audio gear which means most of your music has already been well steeped in 5532 goodness. If you dislike the “sound” of the 5532 that means you must also dislike most of the music available.

BARGAINS: When price matters, you can get amazingly good performance if you know where to shop. The best deals I’ve found are from NJR/JRC. For more on NJR see the Circuit Description section of the O2 Details Article:

- *NJM2068 – You’ll find the NJM2068 in a fair amount of pro audio gear, some of it with big names on the outside. I haven’t seen it as much in consumer electronics but it does show up now and then. It has significantly better noise performance than even the NE5532 and LM4562, and far better than the OPA2134. It’s $0.39 and requires about a third less power than most of its competitors. It can’t drive loads of as low an impedance, and can’t quite match the 5532’s high frequency distortion at higher gains, but it comes so close in most applications it’s essentially a tie.

- *NJM4562 – How about a $3 LM4562 (see above) for $1? That’s pretty much what you get with the NJM4562. My measurements show the two to be very similar with the NJM version even needing less quiescent current. It has slightly more noise than the 2068 above but can better drive low impedance loads, with lower high frequency distortion, at higher gains. For gains < 4X into “easy” (non-reactive) loads of 2K or higher, the NJM2068 is the better part. If you’d rather have the Audi A3 over the very similar but much cheaper Volkswagen GTI, get the National part, otherwise save some money with the NJM4562.

HIGH CURRENT: For driving low impedance and/or significantly reactive loads, there are not many choices in dual DIP8 packages that work comfortably at +/- 12 Volts (24 volts total). The short list:

- *NJM4556 – If you need lots of drive capability the NJM4556 can beat every dual DIP8 op amp I know of. This part is discussed more in the O2 Design Process article.

- *RC4580/NJM4580 – The 4580 isn’t a bad part but, in my tests, it’s inferior to the NJM4556 in pretty much every way. Most significantly it gives up at around 50 mA while the 4556 can manage double that. And it’s only about $0.20 cheaper.
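To see why output current matters so much with low impedance loads, here is a rough Python sketch comparing a 50 mA part against one that manages double that. The 32 ohm load and the 10 volt peak swing limit are hypothetical values picked for illustration, and the function names are made up. It treats the load as purely resistive and the current limit as a hard ceiling, which real parts only approximate.

```python
def max_peak_voltage(load_ohms, current_limit_a, swing_limit_v):
    """Peak output voltage: whichever runs out first, current or swing."""
    return min(swing_limit_v, current_limit_a * load_ohms)


def max_sine_power_mw(load_ohms, current_limit_a, swing_limit_v):
    """Maximum sine wave power (mW) into a resistive load."""
    v_peak = max_peak_voltage(load_ohms, current_limit_a, swing_limit_v)
    return v_peak ** 2 / (2 * load_ohms) * 1000


if __name__ == "__main__":
    # Hypothetical 32 ohm headphones and a 10 V peak swing limit.
    for label, limit_a in (("50 mA part", 0.050), ("100 mA part", 0.100)):
        v_peak = max_peak_voltage(32.0, limit_a, 10.0)
        power = max_sine_power_mw(32.0, limit_a, 10.0)
        print(f"{label}: {v_peak:.1f} V peak, {power:.0f} mW")
```

While the stage is current limited, doubling the available current doubles the peak voltage and quadruples the power into the same load.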

DON’T BOTHER: While the following parts had their glory days, they’re long gone. They offer no advantage over the above parts and are often much worse:

- *TL071/TL072 – These were mainstream audio parts and used in lots of pro gear before the 5532 came along. For all those speed freaks, it’s worth noting they’re rated only slightly slower than the elite chips at 15 V/uS. They were also among the first reasonably priced FET op amps that were useful for high quality audio. But they suffer from very limited output current, they can’t get very close to the rails, have higher distortion, and they’re relatively noisy. Their lower power cousins the TL061/TL062, and the lower spec TL081/TL082 are even worse.

- LM833 – This was one of National’s early attempts at a high performance audio op amp like the 5532 but it failed to beat the 5532.

- 4560 – The 4560 has been eclipsed by the NJM2068, 5532, 4556, etc.

- 4558 – Same as above

- Most Any CMOS Op Amp – There are lots of CMOS op amps (such as the TI TLC and TLV series) with various marketing claims being made. They’re great for lots of non-audio applications. But I’ve not seen any that can even come close to the $0.39 NJM2068 for serious audio performance into similar loads, etc. Unless you have a specific reason (such as a strict low power/low voltage requirement) to use one there are generally better choices.

MEASUREMENTS

WHICH PARTS: To avoid spending 100+ hours on this, I’m only publishing the cream of the crop. Overall I tested nearly two dozen op amps. But it quickly became apparent that many were either obviously inferior, or in this application, approached the limits of the dScope which means they were way past being “good enough”. So I then narrowed the choices down further based on price, popularity, and power consumption. For the output stage it was no contest, the NJM4556 killed everything else. For the gain stage the final list worked out to:

- NJM2068 – Cheap and Cheerful

- NE5532 – The reasonably priced objective benchmark

- OPA2134 – A mainstream audiophile favorite

- OPA2227 – Pushing the high-end of the budget with excellent specs

- OPA2277 – For the low power O2 (long battery life version)

- LM4562 – An audiophile favorite (NJM4562 stocked at Mouser)

- TL072 – A former audio benchmark to put the above in perspective

ALL THE SAME: Here’s a list of measurements that were essentially unchanged by any of the op amps and why:

- Frequency Response – The 220 pF feedback cap determines the upper –3 dB point (~250 Khz) with all the op amps and the low frequency limit of just the gain stage was down to DC. There were no measurable differences. The only thing that could cause a problem is high frequency instability, which might produce a rising HF response. But I checked for that where applicable.

- Slew Rate – All were over the 2.8 V/uS requirement for the O2. Put another way, all were over the requirement for any dynamic headphone amp.

- Square Wave/Stability – Stability issues showed up in the THD vs Frequency sweeps as added high frequency distortion or worse. So I did check the square wave response for any op amps showing unexpected behavior. None of those op amps are shown below (i.e. everything measured below was stable as far as I know).

- Phase – This is set by the 220 pF feedback compensation capacitor to less than 1 degree error at 10 Khz with all the op amps.

- Crosstalk – The internal crosstalk in any of the parts I tested was lower (better than –100 dB) than the rest of the circuit (such as the volume control and headphone jack) so it wasn’t a significant factor.

- THD 20 hz & 20 Khz – These are mostly covered by the THD vs Frequency sweeps.

BEING LAZY: I probably should have tested these, but didn’t:

- Clipping Performance – I probably should have verified the clipping behavior on a scope, but I didn’t. The good news is the THD vs Output sweep tends to show red flags for any weird clipping behavior and all op amps were driven to clipping in that test.

- SMPTE & CCIF IMD – IMD testing might have revealed some minor differences. But the few I checked were so similar I didn’t bother going further. I suspect only the TL072 would have stood out.

- DC Offset – It doesn’t matter in the O2 because the gain stage is AC coupled to the output stage so it wasn’t worth measuring. The datasheets should yield accurate numbers for those interested.

- Distortion Magnifier – It would be interesting to use a distortion magnifier to increase the resolution of the dScope distortion measurements by an order of magnitude to reveal more differences. I have one, but it’s a lot more work to use than the dScope’s native hardware. I’m really not that concerned with distortion in the “triple zero” range given that it’s at least ten times below the most conservative estimates as to what’s audible (0.01%). The dScope reads as low as 0.0005% on its own. I’m not trying to count DNA strings in an electron microscope. I just want to listen to music!

dSCOPE RESIDUAL THD+N: Several things are important to know about the dScope. First these tests are using its Continuous Time hardware analyzer which computes the THD+N in real time. This dramatically speeds up sweeps with 100 points for each pass but it also limits the accuracy and ultimate noise floor compared to using FFT post-processing. The white line in the two graphs below is the residual noise floor of the dScope in loopback and the THD+N is as low as 0.0005% which is plenty low enough for these measurements. But, op amps have become so good they can easily approach or even better that. Keep in mind that 0.0005% is the combined distortion of the signal generator, output amplifier, all the balanced isolated input circuitry (which can handle anything from a few microvolts up to 200 volts RMS!), and the 24/192 A/D in the dScope. So there’s a lot more going on in the dScope than just a single op amp so 0.0005%, viewed in that light, is rather impressive.

DROOPING HF DISTORTION: It’s somewhat debated, but the generally accepted practice limits THD+N measurements to the audio band. This has especially been true in the “digital age” where there can be significant out of band noise from digital circuitry, switching power supplies, etc. If you include the out of band stuff, the entire noise floor rises and masks the true audio performance below 20 Khz. So I’ve cut all but one of the sweeps off at 22 Khz which is the default standard in the dScope. The distortion drops as the harmonics fall above this frequency. For example the third harmonic of 8 Khz is 24 Khz and would be rejected by the analyzer.
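To make the band-limit effect concrete, here is a minimal Python sketch that lists which harmonics of a test tone still land inside a 22 Khz measurement bandwidth. It assumes an ideal brick-wall filter (the real analyzer filter is not that sharp), and the function name and the ten-harmonic limit are purely illustrative:

```python
def harmonics_in_band(fundamental_hz, bandwidth_hz=22_000, max_order=10):
    """Return the harmonic orders (2, 3, ...) that fall at or below the bandwidth limit.

    Assumes an idealized brick-wall measurement filter.
    """
    return [n for n in range(2, max_order + 1) if n * fundamental_hz <= bandwidth_hz]


for tone in (5_000, 8_000, 12_000):
    print(tone, "Hz ->", harmonics_in_band(tone))
# 5000 Hz -> [2, 3, 4]
# 8000 Hz -> [2]
# 12000 Hz -> []
```

Above 11 Khz no harmonics are left inside the band at all, so the reading in that region is essentially just noise, which is why the traces droop toward the right side of the sweeps.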

JAGGED LINES & LEGENDS: The jagged bits in the traces are mostly the input circuitry auto-ranging to keep the signal within 2 dB of the dScope ADC’s 0 dBFS point. This maximizes the dynamic range of the analyzer but causes some “steps” in the sweep when the relays switch different attenuators into the circuit. Audio Precision analyzers do the same thing (unless you average it out with lots of smoothing). Finally the “A” in the legend names is “Channel A” of the analyzer and it’s added automatically. It’s not my usual poor grammar.

THD+N vs OUTPUT: The most interesting thing about this result is how boring it is. Note: This is just the gain stage. The dScope was connected directly to the output pin of the op amp being tested (the NJM4556 output stage is not included). The default NJM2068 is shown at gains of both 2.5X (yellow) and 7X (aqua) while the others are shown at only 7X. The OPA2134 (blue) and the NE5532 (pink) get slightly closer to the rails before they clip but otherwise deliver very similar performance—all 3 parts are so close to the analyzer’s residual it’s mostly a tie. I didn’t even include the OPA2227 or LM4562 as the lines were right on top of the NE5532. The TL072 (orange), however is a different story. The TL072 clearly isn’t liking the ~1.3K load. Note the graph has been re-scaled from my usual 1% THD to only 0.1% at the top. The DC rails are +/- 11.85 V:

LOW POWER OPA2277 THD+N vs OUTPUT: Here’s the low power op amp I’m recommending for the O2 low power option (25+ hour battery life) at both gain settings compared to the standard NJM2068. While the clip points differ by about 10% the distortion performance is very similar. The OPA2277 needs only 0.8 mA per amp or 1.6 mA total vs about 4.5 mA total for the NJM2068. The big downside is it’s more than ten times the cost ($4.40). It’s the best performing low power part I found. Many others, like the On Semi MC33078, TL062, NJM022, etc., are seriously awful:

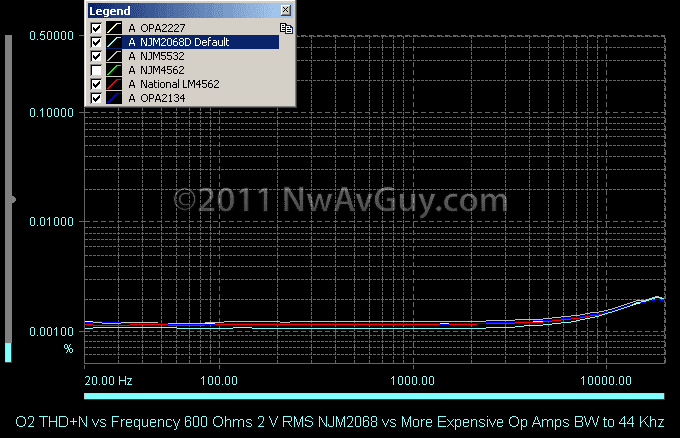

THD+N vs FREQUENCY: Here are the favorites plotted vs frequency also taking the output directly from the gain stage op amp under test. Again, I didn’t bother with the expensive OPA2227 or LM4562 as they just further blurred everything together with the residual of the analyzer. At 2.5X gain the NJM2068 matches everything else. At 7X gain (aqua), however, it’s not quite as happy with the ~1.3K load and starts to show the limitations of a $0.39 part. However, to put its 7X gain 0.0018% max THD+N in perspective, consider that’s 28 times lower than the 0.05% high frequency requirement. Even if you use the much more stringent –80 dB threshold of 0.01% it’s still more than 5 times lower. So it’s extremely unlikely to be an audible problem. And, perhaps more important, the NJM2068 uses significantly less power and has significantly less noise. The TL072, on the other hand, is obviously having a hard time as it did in the earlier sweep:
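For anyone who wants to check the arithmetic behind those comparisons, the following is a small Python sketch. The helper names are made up for illustration; the only inputs are the percentages quoted above:

```python
import math


def percent_to_db(thd_percent):
    """Convert a THD+N percentage to dB relative to the fundamental."""
    return 20 * math.log10(thd_percent / 100)


def times_below(limit_percent, measured_percent):
    """How many times lower the measured figure is than a given limit."""
    return limit_percent / measured_percent


measured = 0.0018  # NJM2068 worst-case THD+N at 7X gain, in percent

print(round(percent_to_db(0.01), 1))          # -80.0
print(round(percent_to_db(measured), 1))      # -94.9
print(round(times_below(0.05, measured), 1))  # 27.8
print(round(times_below(0.01, measured), 1))  # 5.6
```

So the 0.0018% figure works out to roughly –95 dB, about 15 dB below the –80 dB threshold.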

LOW POWER OPA2277 THD+N vs FREQUENCY: Here’s the recommended low power op amp versus the default NJM2068. At 2.5X gain the OPA2277 is still under 0.002% while at 7X gain it hits 0.0045% which is still well under the 0.05% high frequency goal and even comfortably under 0.01%. This is a worthwhile trade off for needing only about 1/3 the power:

OTHER TESTS: Here the dScope bandwidth extends to 44 Khz testing the older V1.0 O2 board at 3X gain. The measurement is at the headphone output into 600 ohms at full volume. The important thing to note is even the relatively high-end LM4562 and OPA2227 don’t make any difference:

dSCOPE RESIDUAL NOISE: Here’s the noise floor of the dScope in dBV (the unit dBV is always referenced to 1 Volt RMS) in loop back with the output of the generator muted and set for a 25 ohm source impedance. To reference these numbers to the full output of the O2 (7 V RMS) you would add 17 dB so the A-Weighted number would be 137 dBr (ref 7V). Also note the noise floor is well below –150 dB except close to 20 hz (which is actually an artifact). The total sum of the noise out to 22 Khz is how the –116.8 dB number is calculated:
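The 17 dB offset comes straight from the ratio between 7 V RMS and the 1 V RMS reference of dBV. As a rough Python sketch (the function name is hypothetical, and the –120 dBV input is only inferred from the 137 and 17 dB figures quoted above, not read from the graph):

```python
import math


def dbv_to_dbr(level_dbv, reference_vrms):
    """Re-reference a dBV level (relative to 1 V RMS) to another RMS voltage."""
    return level_dbv - 20 * math.log10(reference_vrms)


print(round(20 * math.log10(7), 1))       # 16.9 (the offset for a 7 V RMS reference)
print(round(dbv_to_dbr(-116.8, 7), 1))    # -133.7
print(round(dbv_to_dbr(-120.0, 7), 1))    # -136.9
```

Referencing the noise to a larger full-scale voltage always pushes the number further below zero by the same fixed offset.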

NJM2068DD NOISE: The default op amp is impressively quiet even at 7X gain as measured here—especially for $0.39! Being a bipolar input device designed for audio and low noise I would expect decent performance but this is borderline stunning matching even the nearly $5 OPA2227 below:

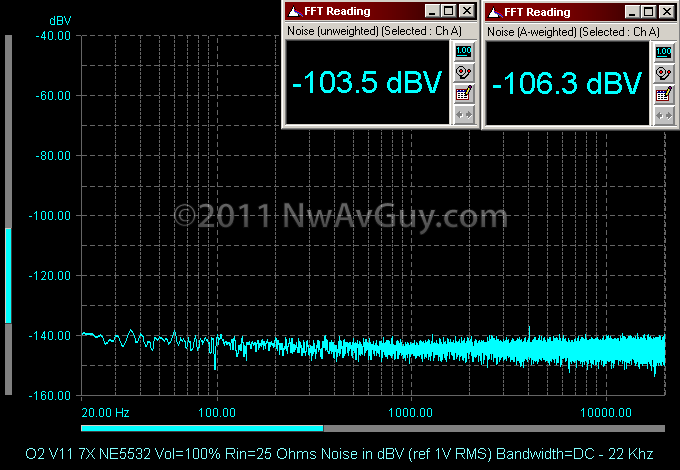

NE5532 NOISE: The professional audio favorite, the NE5532, is about 3 dB worse at 7X gain than the less expensive and less current hungry NJM2068 above but still very quiet. I have heard some versions of the 5532 are a few dB better than others. This was a TI NE5532AP:

LOW POWER OPA2277 NOISE: This is the recommended op amp for the low power version of the O2. As is often the case with lower power op amps, despite its $4+ price, it has roughly 6 dB more noise than the $0.39 NJM2068 but it’s not too bad all things considered and matches the popular OPA2134. Most of the other low power parts I tested were much worse:

OPA134/OPA2134 NOISE: Op amps with FET inputs are typically noisier in many audio applications. And despite the Burr Brown OPA134/2134 being marketed as “low noise” it has nearly 3 dB more noise than the much cheaper NE5532 and nearly 6 dB more noise than the cheaper still NJM part. The –130 dB spike at 60 hz might be related to the high impedance FET inputs or it could just be changes in the AC field around my test bench but it’s not significantly affecting the numbers. You can plainly see the entire noise floor is about 3 dB higher than the graph above:

OPA227/2227 NOISE: This is the most expensive op amp at nearly $5 and, for 12 times the cost of the NJM2068, it only manages to match its noise performance! This is a bipolar input device specifically designed for low noise applications in professional audio equipment. In the O2 this would be a complete waste of nearly $5:

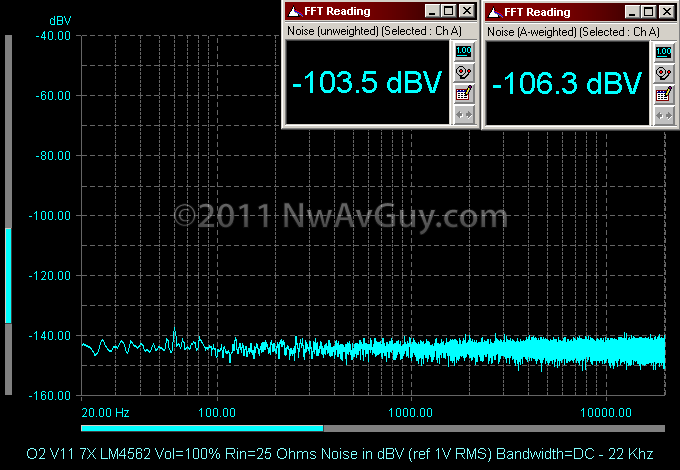

LM4562 NOISE: While the National LM4562 isn’t available from Mouser, the NJM4562 is and they perform very similarly. Here’s the genuine National part and its noise performance turns out to be nearly identical to the NE5532:

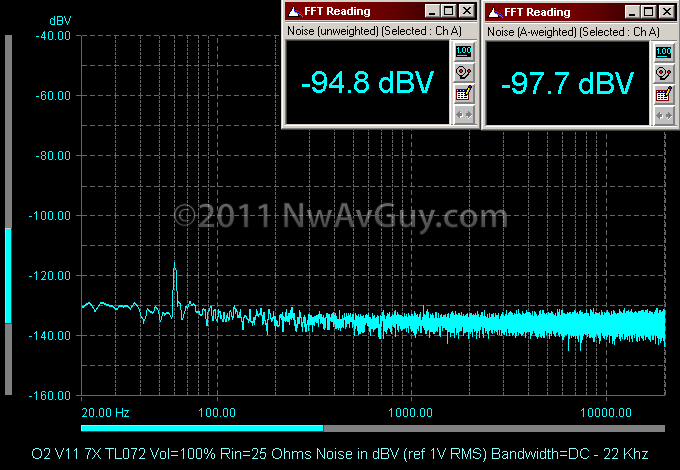

TL072 NOISE: The TL072’s FET inputs and ancient design really show here. It’s nearly 12 dB worse than the NJM part which is fairly huge—especially considering the TL072 is more expensive (about $0.60). The 60 hz spike is back which has me wondering if FET inputs and EMI susceptibility go together (see the FET OPA2134 above):

MEASUREMENT SUMMARY: While there are some differences, if you exclude the ancient TL072, everything else delivers very similar distortion performance, especially at more modest gains. There are perhaps more significant differences in noise performance and that’s where the NJM2068 excels. The NJM2068 might have slightly more high frequency distortion at 7X gain, but it also has 3 dB lower noise and uses 1/3 less power than the NE5532. The overwhelming conclusion is you can spend less than $1 to get performance well beyond the point of diminishing returns. Spending more doesn’t buy you anything useful in this typical application. Hopefully this article at least helps demonstrate there are not big differences between appropriate op amps in a well designed circuit. If you had a headphone amp with an NE5532, and tried to “upgrade it” to an OPA2134, you would only end up with about 3 dB more noise for your trouble.
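To put the noise differences quoted above (about 3, 6 and 12 dB relative to the NJM2068) into linear terms, here is a short Python sketch. The function name is illustrative only, and the dB values are the rounded figures from the text rather than exact measurements:

```python
def db_to_voltage_ratio(db):
    """Convert a level difference in dB to a voltage (amplitude) ratio."""
    return 10 ** (db / 20)


for part, extra_db in (("NE5532", 3), ("OPA2134", 6), ("TL072", 12)):
    print(f"{part}: about {db_to_voltage_ratio(extra_db):.2f}x the noise voltage of the NJM2068")
# NE5532: about 1.41x the noise voltage of the NJM2068
# OPA2134: about 2.00x the noise voltage of the NJM2068
# TL072: about 3.98x the noise voltage of the NJM2068
```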

Wednesdays can't come fast enough! Thanks for making my afternoon at the office pass by a little quicker.

How often do you suspect the higher end op amp being forced to work under less than optimal conditions creates an audible difference? I tend to believe placebo plays a bigger role, but it's an interesting prospect.

Yep, I think this article is more my style than the last one. Great article again!

One question: you mentioned 24/96 slew rates and then implied that SACD would require a higher slew rate than that... why is that?

Thanks b0ck3n, I didn't make Wednesday even in my time zone this time. The article was another late night "rush job".

In a Cmoy, or other application, where the op amp is driving headphones directly nearly all of them are working under stressful conditions. I've also seen examples of where someone removes a relatively slow op amp from a piece of gear and replaces it with a much faster op amp thinking it's an upgrade. But the new fast part is unhappy in a PC board and circuit designed for a much slower part.

The result might have poor transient response as it's now under-compensated. Or it might be borderline unstable with ultrasonic ringing or even oscillation. Any of those can make it sound different.

And subjective bias being what it is, many who just got done de-soldering a $0.50 part and replacing it with a $5+ part will assume what they're hearing must be better sound as the new op amp is supposedly so much better. They think: "Ah! That's how my gear is supposed to sound if the manufacturer hadn't used that cheap crappy op amp!" and they run off to their PC and give the swap a rave review on Head-Fi assuring others propagate the myth and degrade their gear in the same way.

That's what happens when people who don't fully understand the engineering start messing with parts that have response out into the tens or hundreds of Megahertz. They have no way to test they got it right. Even RMAA can't do it as soundcards are blind to most of the frequencies where the instabilities show up. Not to mention few know how to even use RMAA properly.

Evaluating only by ear, when they hear differences, they're strongly biased to perceive them as improvements. And, as you say, even when there are not differences, the placebo effect usually assures people still hear a difference.

SpaceTimeMorph, 24/96 can only reproduce a signal up to about 45 Khz (48 in theory). A DSD SACD, in theory, can reproduce a 100 Khz signal although there seems to be debate on if that's actually even possible with any real world SACD player. But, at least on paper, DSD was designed to compete with 24/192 which cuts off around 90 Khz.

Who needs that when you can get the TakeT super-tweeter? ... *shrug*

OK, at least we know you don't work for TI, National or AD :-)

It is unfortunate that for the longest time seeing a 5532 opamp in some audio gear would automatically devalue it.

Perhaps the dip requirement should be "lifted". I am not very good at soldering but if the carrier board has long traces, one can heat the traces (instead of the pin) and easily solder an SOIC device

Thanks for another great article

Another nice write-up!

I'm a big fan of Samuel Groner and Daniel Weiss' work. I'm not saying their units sound any better than any number of other DACs that measure well beyond the "it matters" point, but their heroic engineering and Swiss precision are worth the price in the same sense that a Patek justifies a premium over a Rolex.

I'll kick in some bux for a blind test reward if you can get His Royal High-ness from AMB to balls-up and agree to one... :-)

I guarantee, you'll have no takers - I offered a huge amount to one of the hoity-toity audio snob magazines some time ago, they get the money if they pass, I get to just publish the results if they didn't. Still silent.

The million dollar speaker cable challenge shows best that the audio emperors have no clothes.

Rob

Throw some clipping tests especially at 10khz, 20khz, and 45khz with a capacitive+resistive load attached.

Another stringent test would be to configure the opamp as a highpass or lowpass Sallen & Key filter with a gain of 3, then overdrive the inputs and watch for phase reversal at the outputs. NE5532 will excel here.

Will you be able to talk a little more about the alternative buffer opamps? I am especially interested about the performance of OPA551 and TLE2062.

If stability with OPA551 is an issue, one idea is to wrap the output resistor inside the feedback loop to reduce output resistance, fa-schmidt at headwize reported good results using a 47 Ohm resistor, however I am not too sure how the math translates into the actual output impedance seen by the headphones.

Thanks Heycarnut! I'd love to have Mr AMB step up to a blind test! But, the fact is, he won't even post in the official O2 threads where there can be an open discussion. So it doesn't seem likely he would ever agree to put any of his amps on the line where they could be judged fairly in a blind test even by his own ears.

Anon - I talk about both the OPA551 and TLE2062 in the O2 Design Process article. The TLE2062 is the op amp I chose for the long battery life version of the O2.

The OPA551 is harder to stabilize without using series resistance or an output inductor. And, perhaps even more significantly, it doesn't offer much benefit over paralleling the much cheaper and more stable NJM4556 when used as a buffer.

Putting a resistor in the feedback loop actually makes stability worse than no resistor at all. With reactive loads, the voltage drop across the resistor will be out of phase with the output of the amplifier. That creates unwelcome phase shift in the feedback signal. And that's all that separates an amplifier from an oscillator. It's generally poor engineering practice unless the resistor is absolutely required for some other reason or only pure resistive loads are used (which rules out nearly all headphones).

The resistor also tends to increase distortion into low impedance loads as it's creating relatively massive feedback error signals under those conditions.

AMB tried the resistor-in-the-feedback-loop trick with the Mini3 and the high-strung AD8397. And the Mini3 is borderline unstable as can be seen with the 4 Mhz ringing in my scope captures. It also has very high distortion into 32 ohms--especially at high frequencies--although at least some of that is the OPA690 "third channel".

But, all that said, with enough time and patience it might still be possible to tame the OPA551 for headphone duty without any significant compromises. It looks great on paper, but ultimately, I'll put a paralleled NJM4556 up against it in a blind test any day. They both have the same power dissipation which is ultimately the limiting factor for power output. And the NJM4556 can deliver 200 mA of peak current (as shown in my O2 Headphone Amp article).

It's nice that you've put some effort into this and I found it quite interesting.

Unfortunately, as you mentioned, these sorts of ideas never rest in peace no matter how much evidence stacks up against them. A lot of the people you'd argue about this sort of thing with will tell you that THD is a worthless spec anyway. Of course even if you measured everything else under the sun they'd still tell you either that something else mystical and unmeasurable was causing the differences they can only hear sighted or that the high-speed opamp they put in a circuit that makes it unstable is actually more "musical".

For the most part you're doing a thankless job but I'd like to chime in and give you at least one thanks for another interesting article.

Thanks Maverick. The "specs don't matter" crowd is why I put the $500 challenge in this article. And apparently heycarnut is willing to raise the prize money even further.

Anyone who argues the specs don't matter is welcome to step up and take the challenge with their own ears, their own headphones, and their own music. I mean, according to all the stuff they post with such definitive authority, how hard can it be to hear the difference between say the $0.69 NE5532 and a $24 pair of OPA627s?

NwAvGuy - Love your articles =) huge fan!

Have you considered testing several of the same op amp to see production irregularities? I had a tube of 100 ne4556 and half of them had high dc offset compared to the rest.

Anon, I don't know anything about the NE4556, which as far as I know, isn't even available. Was that an old Signetics part? And you seriously hand tested 100 of them? Also, only 50 fit in a tube.

I have tested a total of a dozen NJM4556 ICs from five different date codes and all are under 4 mV offset at unity gain (which is how they're used in the O2). They've been very consistent in every other way as well.

Grado used the NJM4556 in their nearly $350 RA-1 headphone amp and I've not heard of any DC offset problems with it.

*** Grado used the NJM4556 in their nearly $350 RA-1 headphone amp ***

IIRC that amp was "outed" a few years ago.

What does that have to do with offset problems? My point was I've not seen any evidence, even in a mass produced product using the NJM4556, that it's a problem.

Aaah, I didn't know about PSRR. It (well, its rejection by some groups of people) explains a lot, thanks!

You can partly blame the Difet process for the OPA627's high price. The main advantage of the process is low input bias current (see OPA129) which isn't really a huge concern in this application. How the OPA627 became a designer opamp for audio applications is a mystery to me. Luckily process advancements have provided alternatives to the Difet process and there are now opamps with comparable input bias specs at a fraction of the cost (see OPA140).

It would have been nice to see how the OPA1612 and OPA1642 measure up but they only come in surface mount packages. Gotta love those SOIC to DIP adapters!

I will confess to being quite interested to see how well the OPA627 performs vs the other OpAmps in the article...

But according to your text the OPA627 should annihilate other OpAmps in a well implemented circuit, then?

Anon, while the OPA627 looks good on paper, what I tried to say was in most audio applications the OPA627 offers no meaningful advantages over even $1 op amps--no matter how well implemented.

(Pardon the Anon status, I'm browsing over a public terminal and the usual cautiousness applies...)

I'm curious though, is this possibly why 'companies' like RSA file away the OpAmp markings on their products. More food for thought on my side, I guess...

Anticipating the probably upcoming O2DAC... ;)

Removing op amp part numbers creates "mystique", makes it harder for someone to clone your design and sell it on eBay, and may be to hide using inexpensive parts. In reality, in most headphone gear, there are lots of op amps that will perform nearly identically as long as they're used correctly. So it really doesn't matter much.

Look what I found, some more test data on opamp headphone driving capabilities (from back in 2005/2006, it seems).

A '072 expectedly sucks, as do all the 4558s. The difference between NE5532 and NJM5532 is interesting.

The 4556 gives the best result short of a dedicated headphone driver.

Thanks Stephan. I'm not surprised the NJM4556 came out on top. With 12 volt supply rails (as used in the O2) the 4580 beats the NE5532 which is also consistent with my measurements. After the TLE2062, the 4580 is my third choice for a DIP8 dual headphone op amp.

I never compared the NJM5532 to the NE5532 driving headphones. I only compared them in the O2's gain stage where they performed very similarly.

I can only disagree with one thing:

A Ferrari 458 Italia is way more raw, engaging, and fun to drive than any BMW, even an M3. I would rather drive a 458 to the supermarket.

Actually I'd rather drive a 911 GT3 to the supermarket but that's besides the point.

In my city, JRC4556 in only available in SMD package (Power dissipation 250mW against 700mW for a DIP). I’ve built a basic CMOYs (gain 4X & also 0 gain) with “real ground”. At +-15V to +-12 V the SMDs would get uncomfortably hot (with / without load), at +-9V SMDs get slightly warm(With 60 Ohms) but still would get hot if I use 32 Ohms or less h/p. My question is

1. Can I get max. power output (comparable to DIP’s peak current capabilities 70mA.Operating voltage +-9V to +-12V) by using proper heatsink?

2. Does running them on +-5V has any disadvantages for h/p under 60 Ohms as this makes the SMDs work cooler.

+/- 5V would be OK if you don't need much voltage swing on the output. And running the two sections in parallel, as in the O2, doubles the dissipation but don't try to parallel even more op amps unless you use larger isolation resistors.

It's hard to heat sink SOIC8 packages unless they're designed with some of their pins attached to the die directly internally which is unlikely with the 4556. So it all comes down to the load, type of music, and supply rails. The dissipation goes up with the square of the supply voltage. +/- 10V has four times the dissipation of +/- 5V.

If you haven't read it, there's a dissipation section in the O2 Details article Circuit Description that might provide some more info.

NwAvGuy, TYSM for putting together your blog. It has been a pure delight reading from beginning to end. All the H-F EE flunkies out there have nothing better to do but throw stones when they should be diligently improving their own inferior product(s). Don't worry about your detractors a bit. The Objective₂ will stand and shine on its own merits.

Since I missed the boat on the GB so I am having to purchase boards from JDS, oh well. I am building several for friends and family and look forward to "un-hearing" them, lol. Soon, the Objective₂ will be "Unheard in the best places".

Ok, now a question. What are the effects of reducing the isolation resistors to .5Ω values? Do the 4556's really need 1Ω to keep from killing each other? I wonder because it seems that the .5Ω output impedance is simply half of the 1Ω value of the 4 resistors. The IEMs I mainly use are really 13Ω, not your measurement standard of 16 Ohms. Do you think that a 3Ω difference will be of any significance?

BTW, I pronounce your handle as 'EnWaveGuy' as I have seen several ask how you are supposed to say it. Cheers!

@FLAudioGuy - it's Northwest AV Guy but that's quite a mouthful. Sadly, all the decent .com domain names are long gone so one has to take what they can get. Thanks for the kind words.

Your post would be better in one of the O2 articles, but to answer your question I wouldn't lower the resistors and I don't think the 0.5 ohm output impedance of the O2 is a problem for your IEMs.

I do the math in the February output impedance article and, as long as the output impedance is less than 1/8th the headphone impedance, life is good. So your IEMs need < 1.6 ohm output impedance and the O2 is already about 3 times better than that.

The reason you don't want to go smaller with the resistors is differences in DC offset voltage (which varies between samples of op amps) create a constant DC current between the op amps. That current, besides draining the batteries a bit faster, also shifts the DC bias point of the output stage in the 4556. If you shift it too far, the distortion goes up. I did lots of tests with different samples of the 4556 and different value resistors to arrive at the optimal 1 ohm value.

I think it's discussed a bit more in the O2 Details Circuit Description.

Great info! Thanks!

Just one question - some time ago I came across the NJM4580 in the DigiKey catalog, and the description said "noiseless". We know there is no such thing, so far. So, you did not mention testing the 4580 as a gain stage, but you did reject it as an output stage. Did you consider it as a gain stage?

Gotta love those noiseless op amps! My perpetual motion machine uses one. ;)

The 4580 has higher noise and higher distortion compared to the NJM2068. So it's an inferior choice for a gain stage. It does, however, have vastly better low impedance drive capability which might be useful in some rare applications. But, generally, the 5532 or LM4562 would be a better choice for such applications if the higher cost is not an issue.

NwAvGuy,

This blog is great. I remember reading the tangentsoft site where they compare op amps with "aggressive" or "laid back" sounds. WTF is that?

As a student of EE, I respect and welcome your objective contribution to the DIY audio community. I started reading about headphone amps with the intent to build my own. With the R&D put into the O2 and the testing done on it to prove the design, I'll probably pick up a PCB and build my own.

Thanks for bringing a grain of truth to the beach of BS.

In regards to Soundshui's question about supply voltage, I also had to buy SMD JRC4556 parts from RS Components in UK. The package was even smaller than SOIC so I had to bend the legs to fit the carriers.

I put one in the headphone amp at +-8V in my CD player with 32ohm headphones. It plays plenty loud enough and gets only a little warm. I have another JRC4556 as the output driver running off +-12V and this gets warmer but not too bad. I put a JRC4556 in my preamp and used it to drive headphones through 1ohm resistors. The sound was unbelievably transparent (maybe that should be believably transparent). Unfortunately with +-18V rails the quiescent dissipation exceeded the 255mW and the light has now gone out on my preamp suggesting the device has expired and taken down the power supply. Looks like I will have to bite the bullet and get some DIPs from Mouser - I just did not want to pay 12GBP shipping on a few 0.50GBP parts - and fit some 9V regulators for safety.

Are you sure the dissipation goes up as the square of the voltage? I would have thought that most of the current goes through constant current sources (P=I*V) rather than resistors (P=I²R). The spec shows operating current only increases from 8mA at +-4V to 9mA at +-18V. Sorry to be picky especially as I made my temperature measurements with the tip of a pinky.

@Steve, the dissipation you have to worry about in the 4556 isn't the quiescent current but in the output stage when the amp is driving headphones. I agree constant current sources keep the quiescent current relatively constant. But the "V*I" losses in the output stage are typically an order of magnitude, or more, greater and they do go up as the square of supply voltage.

There's a section in the O2 Details article Circuit Description that talks about power dissipation. The easy way to measure it is to record the DC power being consumed and subtract the AC audio power being delivered to the load. The difference is all dissipated as heat.

Sorry to hear some are suffering with surface mount parts. Have you looked on eBay? The 4556, in DIP8, is often available there with very inexpensive shipping for just a few dollars.

I have now dropped the rails to +-9 and the 4556 is running much cooler now. With this driving the pre to power amp cables, the improvements at all frequencies over the OPA2134 are not subtle.

Are the LM4562 and the JRC4562 the same animal? The spec sheet says that the NJM4562 is compensated for gains of 10 and above whereas the LM4562 is unity gain compensated. Does your amp design compensate for lower gains? I would also quite like JRC4562 for I-V conversion in place of an OPA275 (now OPA2134). If I order 4556s from Mouser then I could get a couple of these too.

You're correct, the JRC4562 is not a plug and play replacement for the LM4562. I should probably clarify that in my article. The JRC4562 is stable in the gain stage of the O2 at 2.5X gain due to the added compensation in the O2 design but I think the LM4562 is a better, and safer, option for only a dollar or two more.

If you want a Mouser stocked "upgrade" for the O2 gain stage the OPA2227 is a proven option. Mouser doesn't sell National Semi op amps.

Sorry if this is a dumb question, but what about the LME49860? You said you tested it, but you didn't publish the results. You don't have to go to the trouble of publishing the results, but I just want to know how good it is compared to the mainstream LM4562. Is it a clear upgrade in every category, or is it better in some things and worse at others?

The LME49860 is a higher voltage version of the LM4562. They perform similarly. Generally you should only use the LME49860 if you need a higher supply voltage than +/- 15V (30 volts).

Great article NwAvGuy.

When it comes down to it which would you choose, the OPA2227 or LM4562? Yes, I just happen to have these two Op Amps in front of me.

Thanks!

Awesome work!

I just finished my O2 and I'm test driving it as I type...sweet sweet performance. Very happy! Thank you for developing this killer amp. Can't wait for the ODAC!

I'm tempted to try an AD712 that I have on U1. I would like to know your thoughts on the matter and how you think this will work with the O2.

Thanks!

I'm glad you like your O2 but I'm wondering if you read the article above and my Op Amp Myths article? Unless you have your O2 configured for freakishly high gain, the output op amps dominate the distortion in the amplifier. Using a more expensive op amp in the gain stage won't help anything. In fact, it may degrade the performance because the O2's transient response (compensation) has been optimized for the NJM2068.

Op Amp rolling is 99% myth. When real differences are heard between op amps it's because one or more of the op amps are being used incorrectly. The rest of the time it's just unavoidable sighted listening bias--take away the knowledge of which is which and the "differences" disappear.

Hey there NwAvGuy. There's an old audiophile's tale (like an old wives' tale) that OpAmps in a TO-99 package sound better than the same OpAmp in a DIP/SOIC package. The only theory I've heard to explain this is that the metal can package rejects RFI better. So what I want to know is:

1.) Have you measured any objective differences between TO-99 OpAmps and their equivalent in a DIP/SOIC package?

2.) Have you observed (heard) any differences between the different OpAmp packages? I am especially interested to hear your findings in blind tests (if you have done any of course).

This is slightly unrelated but I read your newest article (Apr. 1) and the ODAC sounds (err... measures) incredible. I bought an X-Fi Titanium HD to hold me over until the ODAC + O2 (or maybe the ODA) comes out.

Thanks for all that you are doing for the audiophile community, and take care. :-)

You might look up what's significant about April 1st... (if you missed that detail).

There's the RFI issue as a very weak possibility. It's far more likely RFI will be a problem in the much larger surrounding circuitry and components than with the very tiny silicon die inside an op amp. Shielding just the die is like putting a postage stamp on a badly leaking roof.

But there's a more likely explanation and perhaps a grain of truth to the claim: Thermal issues. If an op amp is driving a challenging load playing music the instantaneous temperature of the tiny silicon die is constantly changing. If the op amp's key parameters fluctuate significantly with temperature changes you can get some degradation in the performance from thermal modulation. And, sometimes even worse, in a dual op amp servicing both channels the music in one channel modulates the thermal performance of the other channel. In that circumstance a metal package tends to keep the die at a more constant temperature and you might get slightly better performance.

I've met and talked with one of National Semi's former audio application engineers about this very issue and his view was it's much more a problem with poorly designed op amps or op amps being used in the wrong application. I agree with him. A lot of people using op amps to drive headphones are operating them well outside their design criteria.

The critical gain stage of the O2 amp runs at a constant temperature so thermal modulation is not a concern there. The output stages are much less critical as unity gain buffers. The parameters that may drift slightly with temperature are unlikely to be an issue in such a buffer stage with relatively high signal levels. Plus the O2 uses a separate device for each channel, which not only spreads the output dissipation across two packages, lowering the temperature of each device, but also prevents one channel from thermally modulating the performance of the other.

You can measure THD+N at 5 Hz or 10 Hz driving the desired load at various output levels and usually see clear signs of any thermal modulation issues. A sine wave at those frequencies typically creates even greater thermal swings than most music does. The O2 passes the test with flying colors. But a single stage amp, like the Mini3 or a Cmoy, may not. It depends on the thermal characteristics of the op amp used.

So, to summarize, if you're using an op amp within its design criteria, and it's designed for high performance audio use, and especially if you're not sharing channels within an op amp, it's unlikely the package will make any measurable or audible difference. If you're using say a video buffer op amp in an audio application, or using a load impedance lower than recommended, you need to test for thermal modulation.

What do you think of the NJM2114D as a buffering OpAmp? My hypothesis is that it's a pretty good OpAmp, because both the Titanium HD by Creative and the Xonar STX by Asus use it for buffering. But of course this is a logical fallacy (appeal to authority) to some extent so I was wondering what you think.

BTW thanks for your response about TO-99 OpAmps, very informative. :) My Titanium HD uses two single OpAmps so I guess temperature is not going to be a big issue.

Thanks again. :-)

The NJM2114D is similar to the 5532 so it should make a fine buffer. You could use either one.

Hello, I've been looking around for a good opamp or opamp+buffer option for a portable SMD amp I'm planning. Reading through your articles I see you've chosen the 2277 and the 2062 as an opamp and buffer. If I were to design an amp around these, would it be necessary to use two 2062s for output buffering? Would using only one defeat the purpose of a buffer? (Something about unity gain allowing for better current amplification compared to having both in one opamp?) - Planning to use the amp for DT880/600s and RE0 64 ohm IEMs but I may want to release it for public use once finished.

I don't want to go into too many details about the amp design here, but I might PM more info if you were interested.

Thanks for the great articles!

If you haven't already, you might want to read up on my comments in the O2 Design and O2 Details articles on the 2062. It performs notably worse in every way than NJM4556 (except power consumption). Into the 600 ohm DT880 I might be more tempted to use a 5532 if you can't get the NJM4556. And because the RE0 shouldn't need much output, and the 880/600 doesn't need much current, you can live with a single output buffer per channel.

The O2 Design article also explains the many benefits of a two stage design.

My main reason for choosing the 2277 and 2062 was the much lower current usage. Since this project is aimed at portability and low power consumption, the less current it draws, the better. As you said though, the noise is still better than almost all amps (esp. in a portable amp). The only concern is the increasing THD with low impedance, which I can't really visualize because your graph only shows 15 ohm and 150 ohm, which have a 10x difference; pretty substantial.

It'd be great to go with the NJM4556, but running from a 3.7v/1400mAh (Li-Ion) battery boosted to +/-7v with a LM3471 that has ~75% compounded efficiency isn't going to leave me with much power leeway.

"Slew Rate – Here’s another huge myth: The faster the slew rate the better. But the fastest “slew” you can get from a 16/44 digital recording has a period of about 22 uS. So the O2, worst case, needs to slew 20 volts in 22 uS or a slew rate of 0.9 V/uS."

This is not entirely correct in the article, since the analog signal is not reconstructed by connecting the samples with straight lines. The worst case slew rate of a pure sine wave (at the zero crossings) is π * Vpp * f, or 3.14159 * 20 Vpp * 0.02205 MHz = 1.385 V/us with CD format audio and "ideal" DAC filtering.

Thanks. You're correct. I was trying to keep the math simple ;) But the rules of thumb for slew rate still apply, and those who think 30 V/uS will sound better and "faster" than 5 V/uS are still just as mistaken when their music can't slew faster than 1.4 V/uS and, in reality, even that value is extremely unlikely (a 0 dBFS 22 kHz signal).
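The two slew rate figures in this exchange can be reproduced with a few lines. This is only a sketch of the arithmetic quoted above; the function names are made up for illustration, and the 20 V peak-to-peak swing and CD sample rate are the values used in the comments.

```python
import math

def straight_line_slew(v_pp, sample_rate_hz):
    """Slew rate in V/us if the full swing happened in one sample period."""
    return v_pp * sample_rate_hz / 1e6

def sine_slew(v_pp, freq_hz):
    """Peak slew rate in V/us of a sine wave, reached at the zero crossings."""
    return math.pi * v_pp * freq_hz / 1e6

V_PP = 20.0            # peak-to-peak output swing in volts
SAMPLE_RATE = 44100.0  # CD sample rate in Hz

print(f"straight-line estimate: {straight_line_slew(V_PP, SAMPLE_RATE):.2f} V/us")
print(f"full-scale sine at fs/2: {sine_slew(V_PP, SAMPLE_RATE / 2):.2f} V/us")
```

Either way the requirement lands well under 2 V/us, which is the point being made about much faster op amps.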

Any thoughts on the OPA2111? I'd like to try it on the mCmoy v2.03.

Hi NwAvGuy,

What Op Amp is suitable for I/V conversion?

Thanks

There are many. I would suggest using the op amp the DAC manufacturer suggests or uses in their reference design.

Would have liked to have seen the specs for, say, the OPA627.

The point of the article was to show how similar many op amps are, when properly used, and how other aspects of the complete design can mask differences (in this case the output stage of the O2).

Many have some favorite op amp they think magically sounds better, but, used properly, they all sound the same. The OPA627 is obscenely expensive, yet in 99% of properly designed audio applications, will sound exactly the same as the 5532 in blind testing.

If you're going to chain together 50 op amps in series then the OPA627 *might* sound better than a cheap 5532. Otherwise forget it.